Hugging Face Inference Endpoints

-

Inference Endpoints

-

Inference Endpoints offers a secure production solution to easily deploy any Transformers, Sentence-Transformers and Diffusers models from the Hub on dedicated and autoscaling infrastructure managed by Hugging Face.

-

A Hugging Face Endpoint is built from a Hugging Face Model Repository.

-

Create a custom Inference Handler

-

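Hugging Face Inference Endpoints support all Transformers and Sentence-Transformers tasks and can also serve custom tasks.

Customization is done through a `handler.py` file in your model repository on the Hugging Face Hub. The file defines an `EndpointHandler` class: `__init__` loads the models once when the Endpoint starts, and `__call__` processes each request. The example below loads an SDXL ControlNet pipeline and an SDXL inpainting pipeline, each with a fused LoRA.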

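```python
import traceback

import torch
from diffusers import (
    ControlNetModel,
    StableDiffusionXLControlNetPipeline,
    StableDiffusionXLInpaintPipeline,
)


class EndPointHandlerBaseConfig:
    # ( ... ) elided in the original; assumed to define controlnet_model,
    # diffusion_model, inpainting_model, lora_model and lora_scale
    ...


class EndpointHandler(EndPointHandlerBaseConfig):
    def __init__(self, path=""):
        # ControlNet conditioning model (fp16 weights stored as safetensors)
        self.controlnet = ControlNetModel.from_pretrained(
            self.controlnet_model,
            torch_dtype=torch.float16,
            use_safetensors=True,
            variant="fp16",
        )

        # SDXL + ControlNet pipeline. enable_model_cpu_offload() moves each
        # sub-model to the GPU only while it runs, so no device argument is passed.
        self.pipeline = StableDiffusionXLControlNetPipeline.from_pretrained(
            self.diffusion_model,
            controlnet=self.controlnet,
            torch_dtype=torch.float16,
            variant="fp16",
            use_safetensors=True,
        )
        self.pipeline.enable_model_cpu_offload()
        self.pipeline.load_lora_weights(self.lora_model)
        self.pipeline.fuse_lora(lora_scale=self.lora_scale)

        # SDXL inpainting pipeline with the same LoRA fused in
        self.inpaint_pipeline = StableDiffusionXLInpaintPipeline.from_pretrained(
            self.inpainting_model,
            torch_dtype=torch.float16,
            variant="fp16",
            use_safetensors=True,
        )
        self.inpaint_pipeline.enable_model_cpu_offload()
        self.inpaint_pipeline.load_lora_weights(self.lora_model)
        self.inpaint_pipeline.fuse_lora(lora_scale=self.lora_scale)

    def __call__(self, data):
        try:
            ...  # ( Processing... ) elided in the original; produces img_base64
            return {"res": img_base64, "error": None}
        except Exception:
            # Return the traceback to the client instead of crashing the worker
            return {"res": None, "error": traceback.format_exc()}
```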
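The handler above needs GPU weights and the elided configuration, so it cannot be tried locally as-is. The following is a minimal, model-free sketch of the same contract, assuming the request body follows the usual `{"inputs": ..., "parameters": ...}` shape: `__init__(path)` runs once per replica, and `__call__(data)` returns the same `{"res": ..., "error": ...}` dictionary. The processing step is a hypothetical placeholder that base64-encodes the prompt text in place of a generated image, and the `upper` parameter is invented purely for illustration.

```python
import base64
import traceback
from typing import Any, Dict


class EndpointHandler:
    """Model-free stand-in for the handler above: same contract, no weights."""

    def __init__(self, path: str = ""):
        # In a real handler, `path` points at the cloned model repository
        # and the models/pipelines are loaded here, once per replica.
        self.path = path

    def __call__(self, data: Dict[str, Any]) -> Dict[str, Any]:
        try:
            inputs = data.pop("inputs", data)
            parameters = data.pop("parameters", None) or {}
            # Hypothetical processing step: a real pipeline would render an
            # image; here the prompt text itself is encoded as the "image".
            text = str(inputs)
            if parameters.get("upper"):  # hypothetical illustrative parameter
                text = text.upper()
            img_base64 = base64.b64encode(text.encode("utf-8")).decode("ascii")
            return {"res": img_base64, "error": None}
        except Exception:
            # Report the failure to the client instead of crashing the worker
            return {"res": None, "error": traceback.format_exc()}
```

Calling the handler directly, e.g. `EndpointHandler(path=".")({"inputs": "a red bicycle"})`, is a quick way to check a `handler.py` before pushing it to the Hub; a malformed payload such as `None` comes back as an `error` traceback rather than an exception.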

Inference Endpoints (dedicated)

-

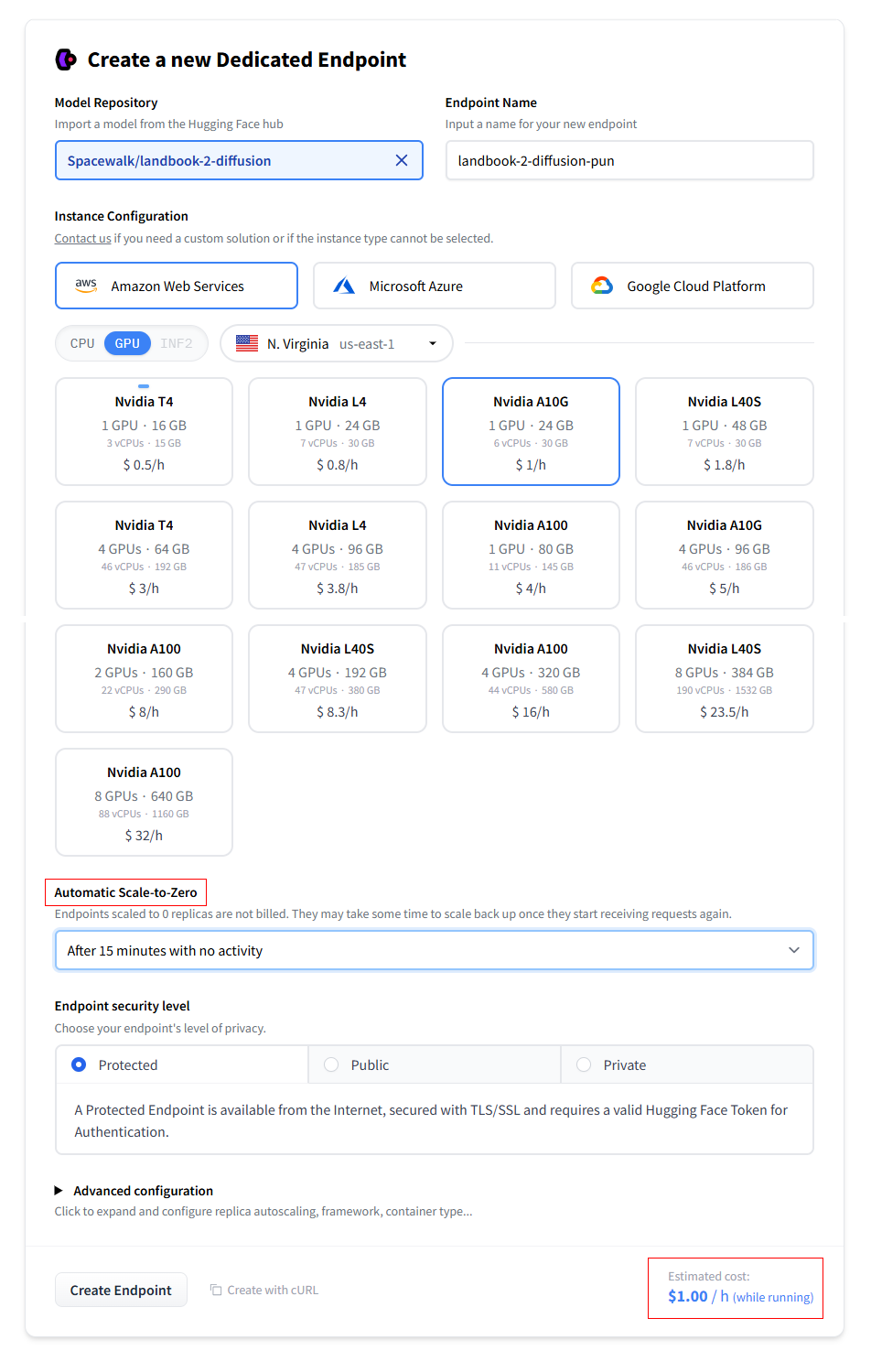

Creating an Endpoint

-

Automatic Scale-to-Zero: You can configure your Endpoint to scale to zero GPUs/CPUs after a certain period of inactivity. A scaled-to-zero Endpoint is no longer billed, but restarting it requires the model to be reloaded into memory, so the first requests after it scales back up are slower (cold start).
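On the client side, a cold start usually shows up as failed requests while the model loads. Below is a minimal sketch of a retry with exponential backoff, assuming the Endpoint answers with HTTP 503 while it is waking up (check the status code your Endpoint actually returns). `send` is a hypothetical callable returning `(status_code, body)`, injected so the logic can be tested without network access.

```python
import time
from typing import Callable, Tuple


def call_with_cold_start_retry(
    send: Callable[[], Tuple[int, str]],
    max_attempts: int = 5,
    initial_delay: float = 1.0,
    retry_statuses: Tuple[int, ...] = (503,),
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, str]:
    """Call `send`, retrying with exponential backoff while the Endpoint wakes up."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    delay = initial_delay
    for attempt in range(1, max_attempts + 1):
        status, body = send()
        # Success, a non-retryable error, or out of attempts: return the result
        if status not in retry_statuses or attempt == max_attempts:
            return status, body
        sleep(delay)  # give the replica time to load the model
        delay *= 2  # 1 s, 2 s, 4 s, ...
    raise AssertionError("unreachable")
```

Injecting `sleep` also keeps tests fast: passing `delays.append` records the backoff schedule instead of actually waiting.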

-

Endpoint Security Level: The standard security level is Protected, which requires an authorized HF token to access the Endpoint.

Public Endpoints are accessible to anyone without token authentication.

Create a new Dedicated Endpoint
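A Protected Endpoint expects the HF token as a Bearer token in the `Authorization` header. The sketch below builds such a request with Python's standard library and unpacks the `{"res": ..., "error": ...}` response produced by the custom handler earlier in this section. The endpoint URL and token are placeholders, and the JSON payload shape is an assumption that has to match whatever your `handler.py` expects.

```python
import base64
import json
import urllib.request
from typing import Any, Dict, Optional


def build_request(
    endpoint_url: str, payload: Dict[str, Any], hf_token: Optional[str]
) -> urllib.request.Request:
    """Build a POST request; Protected Endpoints need a token, Public ones do not."""
    headers = {"Content-Type": "application/json"}
    if hf_token:
        headers["Authorization"] = f"Bearer {hf_token}"
    return urllib.request.Request(
        endpoint_url,
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def decode_result(body: bytes) -> bytes:
    """Unpack the handler's {"res": ..., "error": ...} response into raw bytes."""
    result = json.loads(body)
    if result.get("error"):
        raise RuntimeError(f"Endpoint handler failed:\n{result['error']}")
    return base64.b64decode(result["res"])


# Sending it for real (placeholders, not actual values):
# req = build_request("<YOUR_ENDPOINT_URL>", {"inputs": "a red bicycle"}, "<YOUR_HF_TOKEN>")
# with urllib.request.urlopen(req) as resp:
#     image_bytes = decode_result(resp.read())
```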


-

Deploying an Endpoint

-

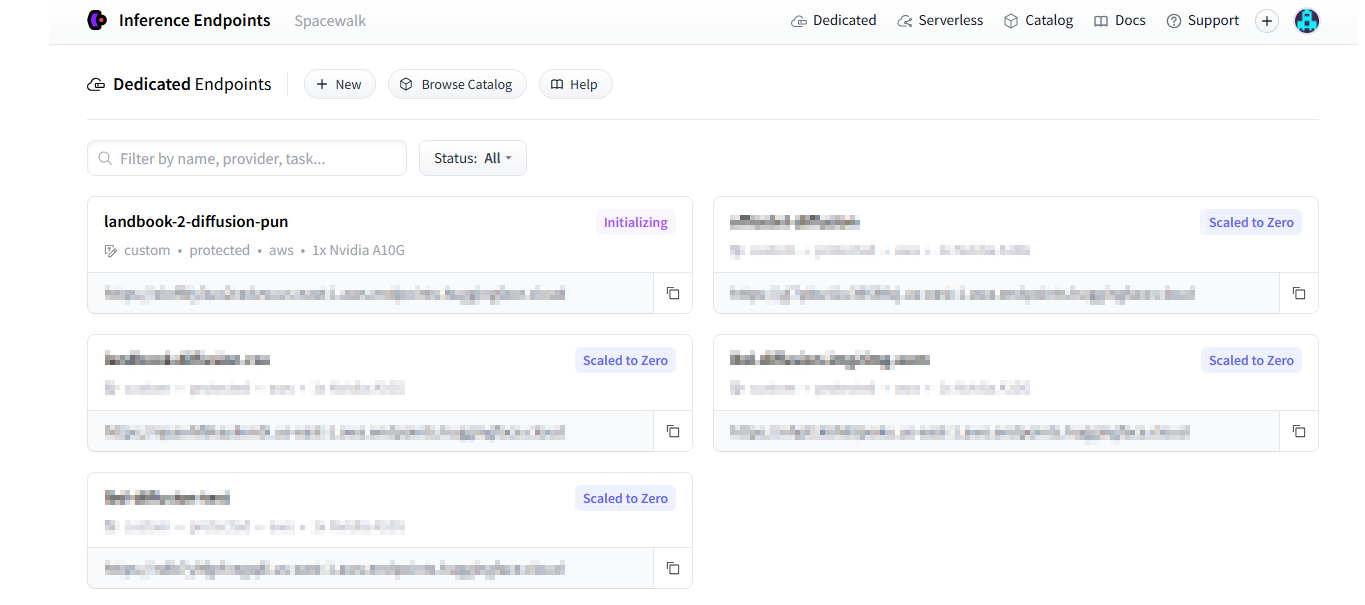

After the Endpoint is created successfully, it starts initializing, as shown in Fig 2.

-

The Endpoint status shows states such as Initializing, Running, and Scaled to Zero.

Initializing Endpoint
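Deployment scripts often need to wait until the Endpoint reports Running before sending traffic. Below is a minimal polling sketch with a timeout, assuming a hypothetical `fetch_status` callable that returns the status label shown in the UI (the exact strings returned by the management API may differ). If you use the `huggingface_hub` client, its `InferenceEndpoint.wait()` method serves the same purpose.

```python
import time
from typing import Callable


def wait_until_running(
    fetch_status: Callable[[], str],
    timeout: float = 600.0,
    poll_interval: float = 10.0,
    clock: Callable[[], float] = time.monotonic,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Poll the Endpoint status until it is "Running" or the timeout expires."""
    deadline = clock() + timeout
    while True:
        status = fetch_status()
        if status == "Running":
            return status
        if clock() >= deadline:
            raise TimeoutError(f"Endpoint still {status!r} after {timeout} s")
        sleep(poll_interval)  # avoid hammering the status API
```

A Scaled to Zero Endpoint only wakes up when it receives a request, so polling alone will not bring it back to Running; send a request first (for example with a retry loop) and then poll.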
